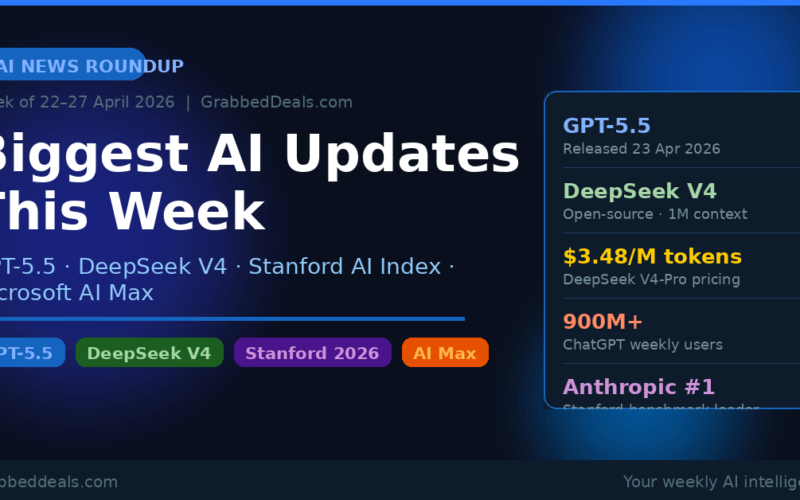

Key Takeaways

- OpenAI released GPT-5.5 on 23 April, calling it its “smartest and most intuitive” model yet, with strong gains in agentic coding and computer use

- DeepSeek dropped V4-Pro and V4-Flash on 24 April: open-source, 1 million token context, and priced far below every Western frontier model

- Stanford’s 2026 AI Index confirms AI is now being adopted faster than the PC or the internet was, with Anthropic leading the benchmark leaderboard as of March 2026

- Microsoft launched AI Max for Search, shifting ad strategy from clicks to AI-driven selection

- The US-China AI race tightened again this week, with the White House making fresh IP theft allegations against Chinese labs

Who is this for? Anyone who wants to stay current on AI without spending hours reading every tech outlet. This weekly roundup covers only what actually mattered, with context on why it matters to you.

OpenAI releases GPT-5.5

The biggest single AI story this week came from OpenAI. On Thursday 23 April, the company released GPT-5.5, just six weeks after GPT-5.4 shipped. That release cadence alone tells you how fast the frontier is moving right now.

OpenAI president Greg Brockman described the new model as a step toward “more agentic and intuitive computing.” The company calls it its smartest and most intuitive model to date, and the benchmark data backs that claim up in a few specific areas.

GPT-5.5 delivers its biggest gains in agentic coding, computer use, scientific research, and document creation. It performs the same tasks as GPT-5.4 but with fewer tokens, which matters a lot if you are building applications on the API. OpenAI says it matches GPT-5.4’s per-token latency in real-world serving while outperforming it across nearly every benchmark category.

For subscribers, GPT-5.5 is rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex immediately. GPT-5.5 Pro is also live for Pro, Business, and Enterprise users. Free users do not get access. The API rolled out on 24 April, one day after the public launch.

One detail worth noting: GPT-5.5’s codename is “Spud.” Slightly less dramatic than the press release language, but at least it is memorable.

The model also arrived with stronger safety testing than any previous release. OpenAI brought in external red teamers and ran extended testing for cybersecurity and biology risks, partly in response to the attention Anthropic’s Mythos model drew for its advanced cyber capabilities earlier in April.

Why this matters to you: If you use ChatGPT for coding, research, or document work, this is a meaningful upgrade. If you build on the OpenAI API, the token efficiency gain is real money over time.

External link: Read OpenAI’s full GPT-5.5 announcement

DeepSeek V4 arrives and changes the price conversation

One day after GPT-5.5 dropped, China’s DeepSeek released V4. The timing was not accidental, and the message was clear: the open-source world is keeping up.

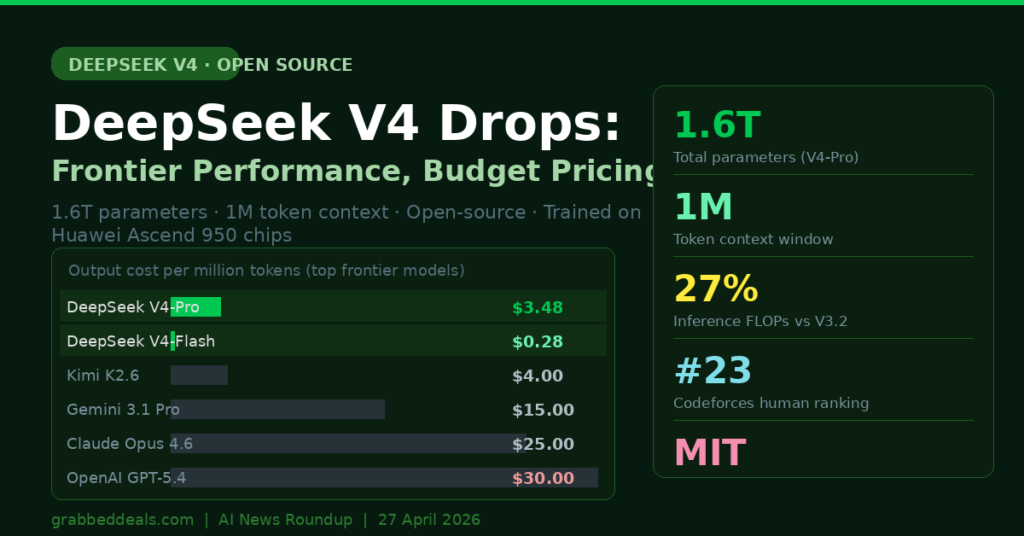

DeepSeek launched two models on 24 April: V4-Pro and V4-Flash. V4-Pro carries 1.6 trillion total parameters with 49 billion active per token, making it the largest open-weight model in the world right now. V4-Flash is the lighter sibling at 284 billion total and 13 billion active parameters. Both models support a 1 million token context window and are available on Hugging Face under an MIT licence.

The headline claim from DeepSeek is efficiency. At 1 million tokens of context, V4-Pro uses only 27% of the inference compute that V3.2 required, and just 10% of the KV cache. V4-Flash pushes that further to 10% of the FLOPs and 7% of the cache. That is a remarkable improvement in how much it costs to run a long-context session.
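As a rough check on the figures above, the short sketch below turns the quoted parameter counts and efficiency percentages into ratios. The numbers are the self-reported ones cited in this article; the dictionary layout and function names are illustrative assumptions, not anything taken from DeepSeek's report.

```python
# Figures as quoted in this article (DeepSeek's self-reported numbers).
# Parameter counts are in billions; the *_vs_v32 values are the share of
# V3.2's cost at 1 million tokens of context.
MODELS = {
    "V4-Pro": {"total_b": 1600, "active_b": 49, "flops_vs_v32": 0.27, "kv_vs_v32": 0.10},
    "V4-Flash": {"total_b": 284, "active_b": 13, "flops_vs_v32": 0.10, "kv_vs_v32": 0.07},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's parameters that are active for each token."""
    return active_b / total_b


def reduction_factor(relative_cost: float) -> float:
    """Convert 'uses X of the old cost' into 'N times cheaper'."""
    return 1.0 / relative_cost


for name, m in MODELS.items():
    frac = active_fraction(m["total_b"], m["active_b"])
    print(
        f"{name}: {frac:.1%} of parameters active per token, "
        f"{reduction_factor(m['flops_vs_v32']):.1f}x less compute, "
        f"{reduction_factor(m['kv_vs_v32']):.1f}x smaller KV cache than V3.2"
    )
```

Read this way, only about 3% of V4-Pro's parameters are used for any given token, and the 27% compute figure works out to roughly a 3.7x reduction compared with V3.2.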

On benchmarks, V4-Pro performs somewhere between GPT-5.2 and GPT-5.4 on standard reasoning tasks. DeepSeek’s own tech report says it trails GPT-5.4 and Gemini 3.1 Pro “by approximately three to six months” in terms of capability. That is actually a bold and honest statement, and it frames V4-Pro as a fast follower rather than a leapfrog.

Where V4-Pro does lead every other model, open or closed, is coding. It achieves a 3,206 Codeforces rating, which would place it roughly 23rd among human competitive programmers. Unlike a score on a static test set, a Codeforces rating sits on the same scale used to rank human competitors.

The price story is the most striking part. V4-Pro costs $1.74 per million input tokens and $3.48 per million output tokens. For comparison, OpenAI charges $30 and Anthropic charges $25 per million output tokens for their top models. V4-Flash, the lighter model, is cheaper than even OpenAI’s nano tier.
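To make the price gap concrete, here is a minimal sketch that compares monthly output costs at the per-million-token prices quoted above. The workload of 500 million output tokens per month is a hypothetical figure chosen for illustration, and input-token costs are left out because the article only gives an input price for V4-Pro.

```python
# Output prices in US dollars per million tokens, as quoted in this article.
OUTPUT_PRICE_PER_MILLION = {
    "DeepSeek V4-Pro": 3.48,
    "OpenAI top model": 30.00,
    "Anthropic top model": 25.00,
}

# Hypothetical workload, chosen only for illustration.
MONTHLY_OUTPUT_TOKENS = 500_000_000


def output_cost(tokens: int, price_per_million: float) -> float:
    """Cost in dollars of generating `tokens` output tokens."""
    return tokens / 1_000_000 * price_per_million


baseline = output_cost(MONTHLY_OUTPUT_TOKENS, OUTPUT_PRICE_PER_MILLION["DeepSeek V4-Pro"])

for provider, price in OUTPUT_PRICE_PER_MILLION.items():
    cost = output_cost(MONTHLY_OUTPUT_TOKENS, price)
    print(f"{provider}: ${cost:,.2f} per month ({cost / baseline:.1f}x V4-Pro)")
```

At these list prices, the same output volume costs roughly 8.6 times as much on OpenAI's top model and roughly 7.2 times as much on Anthropic's as it does on V4-Pro. Real bills also depend on input tokens, caching, and discounts, none of which are modelled here.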

DeepSeek also confirmed that V4 was trained on Huawei’s Ascend 950 chips, a deliberate signal that China can now build frontier AI models on domestic hardware without relying on Nvidia GPUs subject to export controls.

External link: Read the full DeepSeek V4 technical report on Hugging Face

Stanford’s 2026 AI Index: what the data says

The Stanford AI Index for 2026 dropped this week, and it is the clearest single-document snapshot of where AI actually stands right now.

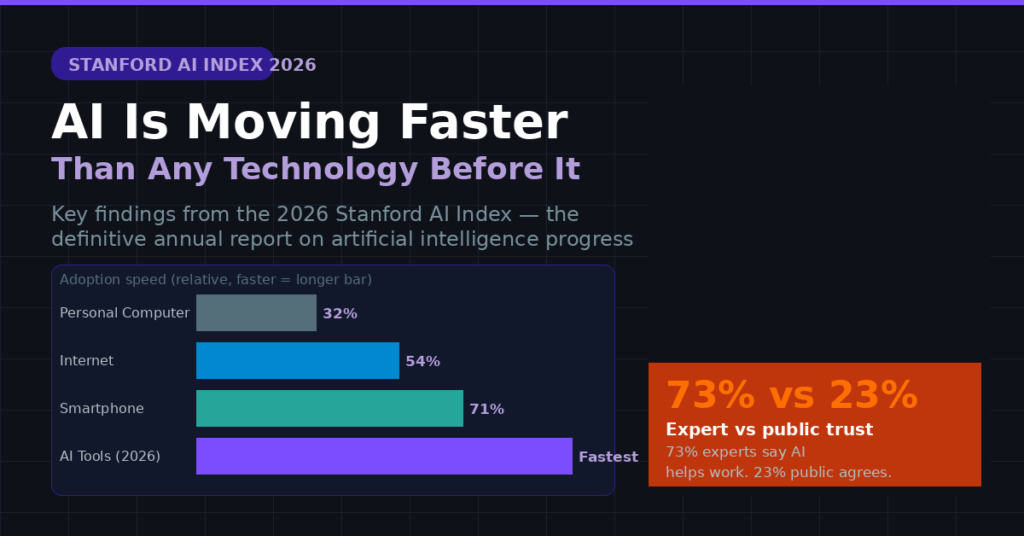

The headline finding: AI adoption is moving faster than any previous general-purpose technology. People are adopting AI tools quicker than they adopted the personal computer or the internet. That is not editorial hype; it is what the adoption curve data shows.

On the model side, the report confirms that as of March 2026, Anthropic leads the benchmark leaderboard, with xAI, Google, and OpenAI close behind. Chinese models from DeepSeek and Alibaba lag “only modestly,” which is a striking change from where things stood 18 months ago.

The report also makes a pointed observation about disclosure. As competition has intensified, the leading AI companies have stopped publishing their training code, parameter counts, and dataset sizes. You now know far less about the models you are using than you did two years ago.

One number that stands out: the US has an estimated 5,427 data centres, more than ten times as many as any other country. China leads in AI research publications and patents. The two nations have different AI advantages, and neither is pulling decisively ahead across every dimension.

The index also surfaced a trust gap worth paying attention to. 73% of AI experts believe AI will have a positive impact on how people do their jobs. Only 23% of the American public agrees. That gap does not close by itself; it requires communication and demonstrated usefulness from AI products over time.

Microsoft bets on the agentic web with AI Max

Microsoft made several significant advertising and AI announcements this week that together suggest where the company thinks the web is heading.

The centrepiece is AI Max for Search. This new product expands query matching and personalises ads across both Copilot and Bing, designed for a world where AI agents are increasingly involved in how people discover and buy things. The core idea is that optimising for clicks is the wrong framework when an AI is deciding what to show a user. You need your content to be chosen by the AI, not just ranked in search results.

Microsoft also introduced Offer Highlights, which surfaces key product selling points directly inside AI conversations. Alongside this, the company released tools to track AI visibility and enable structured commerce for agent transactions, including the ability to support in-chat purchases.

The shift in language from “clicks” to “AI selection” is worth taking seriously. If search traffic increasingly flows through AI intermediaries, every business that depends on Google or Bing traffic needs to think about how its content, product listings, and metadata read to an AI agent, not just to a human searcher.

External link: Microsoft’s full AI Max announcement on the Microsoft Advertising blog

The US-China AI conflict escalates

This week saw a fresh round of public accusations between the US and China over AI intellectual property.

On the day before DeepSeek V4 launched, the White House formally accused Chinese AI firms, including DeepSeek, of conducting model extraction attacks on US labs at scale. The accusation describes a method where thousands of proxy accounts send queries to frontier models, collect the responses, and use those responses to train smaller models to replicate the reasoning patterns of the larger one. OpenAI, Anthropic, and Google have all warned about this practice in 2026, calling it “illicit distillation.”

China’s foreign ministry called the claims “groundless” and described them as a smear against Chinese AI research.

The context matters here. DeepSeek V4 runs on Huawei’s domestically manufactured Ascend chips, which is a direct response to US export restrictions on Nvidia GPUs. Semiconductor Manufacturing International Corp, the Chinese chipmaker that makes Huawei’s processors, saw its Hong Kong shares jump 10% the day V4 launched, which tells you what investors think this milestone means.

The Stanford AI report this week also confirmed that Chinese labs have now “effectively closed” the AI performance gap with US rivals, at least on benchmarks. Whether that came through original research or more questionable means is exactly what the ongoing policy dispute is about.

Agentic AI moves from demo to production

Beyond the individual model releases, the underlying theme of this week was that agentic AI has genuinely crossed from demo to deployment.

DeepSeek V4 specifically highlights agentic coding as its leading benchmark category, scoring an open-source best on LiveCodeBench. OpenAI’s marketing for GPT-5.5 centres on its ability to handle “messy, multi-part tasks” without step-by-step guidance. Microsoft’s AI Max is built around agents making purchasing decisions on behalf of users.

MIT Technology Review published a piece this week noting that the next stage of agentic AI involves teams of agents cooperating on complex goals, not just single agents completing individual tasks. The shift from solo agent to coordinated multi-agent workflows is where the real productivity gains are expected to come from in the second half of 2026.

For anyone building software or products right now, the practical implication is this: the competitive moat is no longer the ability to build. AI agents can now write code, test it, deploy it, and market it. The moat is now distribution and judgement: who you serve and what you decide to build, not whether you can technically build it.

What to watch next week

A few things worth keeping an eye on in the week ahead:

Grok 5: xAI has been signalling a Q2 2026 release for Grok 5, rumoured at 6 trillion parameters. If it arrives next week, it will be the single largest model release in xAI’s history.

DeepSeek V4 independent benchmarks: DeepSeek’s self-reported numbers are good, but independent evaluations often tell a different story. Watch for community-run benchmarks on Hugging Face and LLMStats over the coming days.

OpenAI’s IPO preparations: Multiple reports this week confirmed OpenAI is taking early steps toward a public listing, potentially as soon as late 2026. The company surpassed $25 billion in annualised revenue and has over 900 million weekly active ChatGPT users. Watch for more financial disclosures as an IPO gets closer.

Apple’s Siri overhaul: Apple confirmed earlier in April that a fundamentally rebuilt, Gemini-powered Siri is targeting a 2026 launch. No firm date yet, but the next major iOS update is where this is expected to land.

FAQ

What is GPT-5.5 and how is it different from GPT-5.4? GPT-5.5 is OpenAI’s latest frontier model, released on 23 April 2026. The key differences from GPT-5.4 are stronger performance in agentic coding, computer use, and scientific research, combined with better token efficiency. OpenAI says GPT-5.5 completes the same tasks with fewer tokens than GPT-5.4, which means better results for the same or lower cost. It also arrived with stronger safety testing for cybersecurity and biology risks.

Is DeepSeek V4 really better than GPT-5.4? Not overall. DeepSeek’s own technical report says V4-Pro trails GPT-5.4 and Gemini 3.1 Pro by approximately three to six months in capability. Where V4 genuinely leads is in coding benchmarks, long-context efficiency, and price. At $3.48 per million output tokens versus OpenAI’s $30, V4-Pro is a serious option for developers building applications at scale who do not need absolute top performance.

What is the Stanford AI Index 2026 and why does it matter? The Stanford AI Index is an annual report from Stanford University’s Institute for Human-Centered AI that measures AI progress across research, deployment, economic impact, and public perception. It is one of the most cited independent assessments of the AI industry. The 2026 edition confirms that AI adoption is outpacing every previous technology wave, that Chinese labs have nearly closed the performance gap with US rivals, and that public trust in AI remains far lower than expert optimism would suggest.

What does Microsoft’s AI Max for Search mean for businesses? AI Max is Microsoft’s acknowledgment that search is shifting from a model where humans click on results to one where AI agents select and present information on users’ behalf. For businesses that rely on search traffic, this means the way you structure your product information, content, and metadata needs to be understandable to an AI, not just to a human reader. Optimising for AI selection is becoming as important as traditional SEO.

What is model distillation and why is the US government concerned about it? Model distillation is a technique where you train a smaller, cheaper model by feeding a larger model thousands of queries and using its responses as training data. When it is done against someone else’s model without permission, it is often called model extraction. The result is a smaller model that mimics the reasoning patterns of the larger one, without requiring the compute or data to train from scratch. The US government’s concern is that Chinese AI labs may be using this method against closed frontier models like GPT and Claude to replicate their capabilities, which would bypass US export restrictions and IP protections.
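The idea behind distillation can be shown with a toy sketch. Everything in it is a stand-in: the "teacher" is a simple function we can only query, and the "student" is a two-parameter line fitted to the teacher's answers. Real distillation trains a neural network on a large model's text outputs, so treat this as an illustration of the concept, not of any lab's actual method.

```python
def teacher(x: float) -> float:
    """Hypothetical black-box 'large model': we only see its answers."""
    return 3.0 * x + 2.0


def collect_responses(queries):
    """Step 1: send queries to the teacher and record (query, response) pairs."""
    return [(q, teacher(q)) for q in queries]


def fit_student(pairs):
    """Step 2: fit a student y = w*x + b to the teacher's responses
    using ordinary least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    w = cov / var
    b = mean_y - w * mean_x
    return w, b


# 101 evenly spaced queries between -5 and 5.
queries = [i / 10 for i in range(-50, 51)]
w, b = fit_student(collect_responses(queries))


def student(x: float) -> float:
    """The distilled model: built only from the teacher's outputs."""
    return w * x + b


# The student was never shown the teacher's internals, only its answers.
print(f"teacher(7.0) = {teacher(7.0):.3f}, student(7.0) = {student(7.0):.3f}")
```

The student never sees how the teacher works internally, only what it answers. That is why access to a model's outputs at scale is enough to copy much of its behaviour.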

Author bio: The GrabbedDeals editorial team covers AI tools, tech news, and buying guides for UK, US, and European readers. Our weekly AI roundup is published every Sunday morning so you start your week informed.

Related Posts

- What Is Agentic AI? A Plain-English Guide for 2026

- 5 AI Agent Tools Everyone Is Talking About in 2026

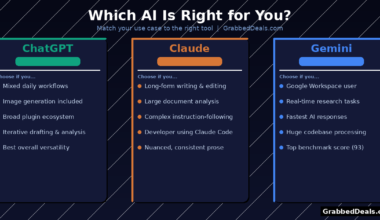

- ChatGPT vs Claude vs Gemini: Which AI Is Actually Best in 2026?
